AI Development: Cost Optimization Strategies for Sustainable Growth

March 12, 2026

Enterprises worldwide are accelerating their adoption of advanced technologies, with AI development now a central element of digital transformation and operational innovation. Yet escalating AI costs, driven by token usage, compute infrastructure, and talent investment, are raising questions about whether that investment is sustainable and when it delivers value.

This article examines actionable cost optimization strategies that help leaders manage AI expenses while preserving long-term growth potential.

Understanding where and how money is spent in AI systems is foundational to effective cost management and optimization. AI workloads add cost dimensions, such as tokens and accelerator hours, that traditional cloud monitoring tools often do not capture, so real-time cost visibility is essential.

The main cost dimensions and their impact:

| Cost Dimension | Why It’s Important | Key Metrics to Track |
| --- | --- | --- |
| Token Consumption | AI APIs are typically billed per token, so inefficient prompts or verbose outputs drive up spend without adding corresponding business value. | Input tokens, output tokens, tokens per feature/endpoint. |
| GPU/TPU Utilization | Compute accelerators are costly, and idle or under-utilized resources waste budget; high utilization aligns spending with performance needs. | Instance hours, saturation %, memory usage, provisioned vs. active GPU usage. |
| Provisioned Throughput / Reserved Capacity | Unused provisioned capacity becomes a sunk cost; tracking usage rates helps right-size infrastructure. | Utilization ratio, effective hourly rates, reserved vs. actual throughput. |
| Model Invocation Performance | Slow endpoints, errors, or retries can inflate cost via repeated token use and unnecessary compute cycles. | Latency (P50/P95/P99), error rate, retry rate. |
| Multi-Tenant Cost Allocation | Without proper tagging and segmentation, shared AI resources obscure accountability and make it difficult to allocate costs by project or team. | Requests tagged by tenant/feature, cost allocation by project/team. |

Cost visibility enables teams to spot inefficiencies early and enact targeted interventions before AI cost spikes escalate out of control.
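As a rough illustration of how two of these dimensions turn into numbers, the sketch below computes a per-request token cost and an effective GPU hourly rate. The `TokenPrice` class, both function names, and all prices are hypothetical placeholders, not real vendor rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical per-million-token prices in USD (not real vendor rates)."""
    input_per_million: float
    output_per_million: float


def request_cost(input_tokens: int, output_tokens: int, price: TokenPrice) -> float:
    """Cost of one API call when input and output tokens are billed separately."""
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


def effective_gpu_hourly_rate(hourly_rate: float, utilization: float) -> float:
    """Price paid per hour of useful work; utilization is a fraction in (0, 1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_rate / utilization


# Illustrative values only.
example_price = TokenPrice(input_per_million=2.0, output_per_million=8.0)
print(request_cost(1_000, 500, example_price))
print(effective_gpu_hourly_rate(4.0, 0.5))
```

Under these assumed prices, a GPU that sits idle half the time costs twice its list price per hour of useful work, which is why utilization and provisioned-versus-active usage appear in the table.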

AI development involves several core cost factors that enterprises must understand to budget and optimize effectively. Each of these components contributes distinctly to total expenses and affects financial planning for scalable AI systems.

Key Cost Factors in AI Development:

By understanding these core cost drivers, enterprises can more accurately forecast budgets and identify opportunities to control spending as AI adoption grows.

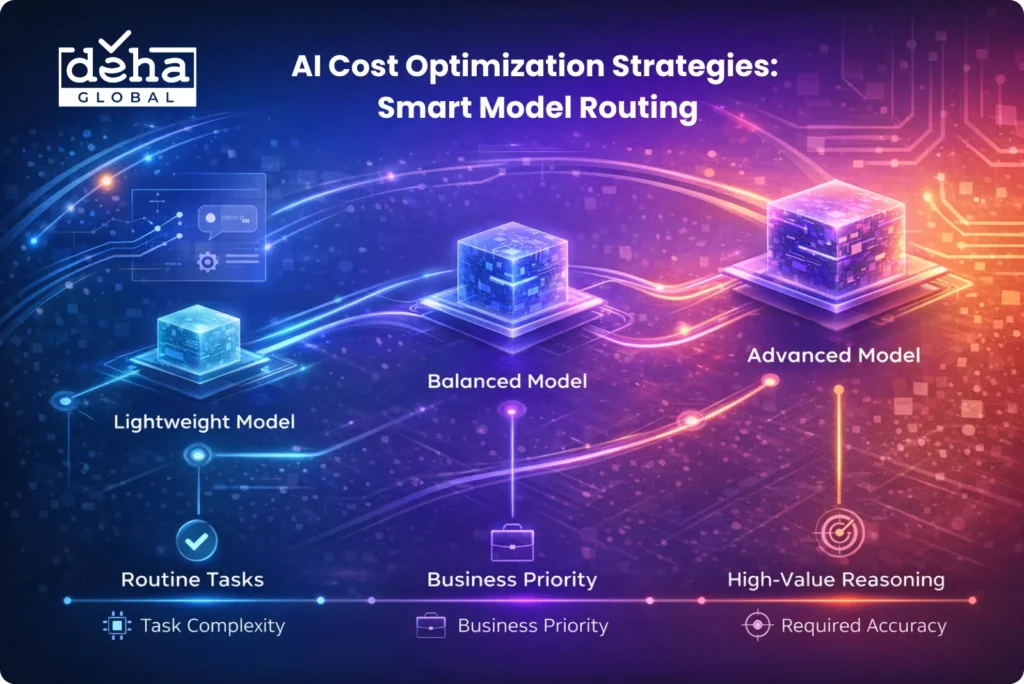

Intelligent model routing allows enterprises to direct requests to different models based on task complexity, business priority, and required accuracy. Instead of defaulting to a high-cost, large-scale model for every request, organizations can dynamically select lightweight models for routine tasks and reserve advanced models for high-value reasoning scenarios.

To implement this strategy effectively, teams should:

This approach reduces unnecessary compute consumption and flattens AI development cost growth as usage scales, ensuring infrastructure spend increases proportionally with business value rather than model size.
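A minimal sketch of such a router follows, assuming only two tiers and a simple rule set. The model names, the `Request` fields, and the word-count threshold are all hypothetical; a production router would likely use a classifier or measured quality data instead.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; substitute whatever your provider offers.
LIGHT_MODEL = "small-model"
LARGE_MODEL = "large-model"


@dataclass(frozen=True)
class Request:
    prompt: str
    priority: str = "low"          # "low" or "high" business priority
    needs_reasoning: bool = False  # set by the caller or an upstream classifier


def route(request: Request, max_light_words: int = 200) -> str:
    """Pick the cheapest model that is expected to handle the request."""
    if request.needs_reasoning or request.priority == "high":
        return LARGE_MODEL
    # Long prompts are treated as complex; word count is a crude proxy.
    if len(request.prompt.split()) > max_light_words:
        return LARGE_MODEL
    return LIGHT_MODEL


print(route(Request("Classify this support ticket as billing or technical.")))
print(route(Request("Draft a migration plan.", needs_reasoning=True)))
```

Keeping the rules in one function makes the routing policy easy to audit and to tune as cost and accuracy data accumulate.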

Token usage directly impacts AI development cost because most APIs charge per input and output token processed. Inefficient prompts, redundant instructions, and overly verbose outputs significantly inflate expenses without delivering proportional value.

Organizations can optimize prompt design by:

Refined prompt engineering shortens interaction cycles and reduces token waste, which directly lowers operational AI cost, especially in high-volume generative workflows.
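One way to picture the effect is a small pre-processing step that removes repeated instructions and stray whitespace before a prompt is sent. The sketch below is illustrative only: `compact_prompt` is a hypothetical helper, and `estimate_tokens` uses a rough four-characters-per-token heuristic rather than a real tokenizer.

```python
import re


def estimate_tokens(text: str) -> int:
    """Rough heuristic of about 4 characters per token; real tokenizers differ."""
    return max(1, len(text) // 4) if text else 0


def compact_prompt(prompt: str) -> str:
    """Collapse whitespace and drop blank or repeated instruction lines."""
    seen = set()
    kept = []
    for line in prompt.splitlines():
        normalized = re.sub(r"\s+", " ", line).strip()
        if not normalized:
            continue  # skip blank lines
        key = normalized.lower()
        if key in seen:
            continue  # skip instructions that were already stated
        seen.add(key)
        kept.append(normalized)
    return "\n".join(kept)


verbose = "Answer briefly.\n\nAnswer   briefly.\nSummarize the   report."
compact = compact_prompt(verbose)
print(compact)
print(estimate_tokens(verbose), estimate_tokens(compact))
```

Capping output length through the API's maximum-output-tokens setting, where one is available, complements this kind of input trimming.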

Centralized telemetry pipelines provide real-time visibility into token usage, GPU utilization, latency, and billing metrics across AI systems. Without this centralized oversight, cost anomalies often go undetected until invoices arrive, making reactive control difficult.

To follow this strategy, enterprises should:

Granular telemetry enables proactive cost management, helping finance and engineering teams prevent runaway spend and maintain disciplined AI development practices.
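The idea can be sketched as an in-memory aggregator that records usage events and flags services that exceed a budget. The `UsageEvent` fields, the `CostTelemetry` class, and the budget figures are assumptions for illustration; a real pipeline would stream events to a metrics backend rather than hold them in a dictionary.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    service: str
    input_tokens: int
    output_tokens: int
    cost_usd: float


class CostTelemetry:
    """Aggregates spend per service and reports budget overruns."""

    def __init__(self, budgets_usd: dict[str, float]):
        self.budgets_usd = budgets_usd
        self.spend_usd: dict[str, float] = defaultdict(float)
        self.tokens: dict[str, int] = defaultdict(int)

    def record(self, event: UsageEvent) -> None:
        self.spend_usd[event.service] += event.cost_usd
        self.tokens[event.service] += event.input_tokens + event.output_tokens

    def over_budget(self) -> list[str]:
        """Services whose recorded spend exceeds their budget, sorted by name."""
        return sorted(
            service
            for service, spent in self.spend_usd.items()
            if spent > self.budgets_usd.get(service, float("inf"))
        )


telemetry = CostTelemetry({"chatbot": 10.0, "search": 50.0})
telemetry.record(UsageEvent("chatbot", 40_000, 12_000, 6.0))
telemetry.record(UsageEvent("chatbot", 35_000, 9_000, 5.0))
telemetry.record(UsageEvent("search", 10_000, 2_000, 1.5))
print(telemetry.over_budget())
```

Checking budgets as events arrive, rather than at invoice time, is what turns telemetry into a proactive control.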

Retrieval-Augmented Generation (RAG) architectures often increase token usage because large contextual documents are passed into models. If retrieval depth is not optimized, unnecessary context inflates processing time and token charges.

Optimization methods include:

Improving RAG efficiency reduces contextual overhead, speeds response times, and lowers total AI development cost while maintaining output relevance.
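A simple way to bound retrieval depth is to keep only chunks above a relevance threshold and stop adding them once a token budget is reached. The sketch below assumes chunks arrive as `(text, score)` pairs from a retriever and uses word count as a stand-in for tokens; the function name, threshold, and budget are hypothetical.

```python
def select_context(
    chunks: list[tuple[str, float]],
    min_score: float,
    token_budget: int,
) -> list[str]:
    """Keep the highest-scoring chunks that fit within the token budget."""
    selected: list[str] = []
    used = 0
    for text, score in sorted(chunks, key=lambda c: c[1], reverse=True):
        if score < min_score:
            break  # remaining chunks are even less relevant
        cost = len(text.split())  # word count as a crude token proxy
        if used + cost > token_budget:
            continue  # skip chunks that would exceed the budget
        selected.append(text)
        used += cost
    return selected


retrieved = [
    ("refund policy allows returns within thirty days", 0.92),
    ("company history founded long ago", 0.30),
    ("shipping takes five business days", 0.75),
]
print(select_context(retrieved, min_score=0.5, token_budget=12))
```

Tightening `min_score` or `token_budget` trades recall for lower cost, so both values are worth tuning against answer quality.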

Many AI workloads, such as report generation or background analytics, do not require real-time execution. Running these tasks individually wastes GPU cycles and increases per-task processing cost.

Enterprises can:

Batch execution maximizes hardware utilization and reduces idle compute, leading to measurable cost savings in large-scale AI environments.
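As a sketch of the grouping step, the helper below splits a queue of deferred jobs into fixed-size batches so each model invocation processes several items. The `batched` function and the job names are illustrative; how a batch is actually submitted depends on the serving stack or provider.

```python
from typing import Iterator, Sequence, TypeVar

T = TypeVar("T")


def batched(jobs: Sequence[T], batch_size: int) -> Iterator[list[T]]:
    """Yield consecutive groups of at most batch_size jobs."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(jobs), batch_size):
        yield list(jobs[start:start + batch_size])


# Hypothetical queue of non-urgent report jobs.
pending = ["report-a", "report-b", "report-c", "report-d", "report-e"]
for batch in batched(pending, batch_size=2):
    print(batch)
```

Five jobs become three invocations instead of five here; larger batch sizes reduce per-job overhead further, up to the memory limits of the hardware.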

Over-provisioned infrastructure is one of the most significant contributors to AI cost inflation. Without workload-aligned provisioning, enterprises pay for idle GPU hours and unused capacity.

Best practices include:

Infrastructure enforcement aligns compute supply with actual usage, preventing unnecessary overspending and improving long-term sustainability of AI development.
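One hedged way to express workload-aligned provisioning is a proportional scaling rule: scale the GPU count by the ratio of observed to target utilization, within fixed bounds. The function name, the 70% target, and the bounds below are assumptions for illustration, not recommended values.

```python
import math


def desired_gpu_count(
    current_gpus: int,
    avg_utilization_pct: float,
    target_utilization_pct: float = 70.0,
    min_gpus: int = 1,
    max_gpus: int = 16,
) -> int:
    """Proportional rule: scale capacity so utilization approaches the target."""
    if target_utilization_pct <= 0:
        raise ValueError("target_utilization_pct must be positive")
    raw = math.ceil(current_gpus * avg_utilization_pct / target_utilization_pct)
    # Clamp to the allowed range so the fleet never scales to zero or runs away.
    return max(min_gpus, min(max_gpus, raw))


print(desired_gpu_count(current_gpus=8, avg_utilization_pct=35))
print(desired_gpu_count(current_gpus=4, avg_utilization_pct=95))
```

A fleet running well below target shrinks, and one running hot grows, so provisioned capacity tracks actual demand instead of a static estimate.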

AI environments often serve multiple products, teams, or business units, which can obscure accountability for cost consumption. Without tagging and allocation mechanisms, CFOs struggle to identify which initiatives generate value versus waste.

Organizations should:

Application-level tagging increases transparency and financial accountability, enabling leaders to prioritize high-ROI AI initiatives and discontinue inefficient spending areas.
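To make the allocation step concrete, the sketch below rolls up cost records by a tag and surfaces untagged spend as its own bucket. The record layout, the `team` tag key, and the `allocate_costs` name are hypothetical; billing exports differ by provider.

```python
from collections import defaultdict


def allocate_costs(records: list[dict], tag_key: str) -> dict[str, float]:
    """Sum cost per tag value; records missing the tag go to 'untagged'."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        owner = record.get("tags", {}).get(tag_key, "untagged")
        totals[owner] += record["cost_usd"]
    return dict(totals)


# Hypothetical usage records exported from a billing or telemetry system.
usage = [
    {"cost_usd": 12.5, "tags": {"team": "search", "feature": "autocomplete"}},
    {"cost_usd": 7.5, "tags": {"team": "search", "feature": "ranking"}},
    {"cost_usd": 3.0, "tags": {}},
]
print(allocate_costs(usage, "team"))
```

Reporting the untagged bucket explicitly gives teams a measurable target for improving tagging coverage.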

In Conclusion

Understanding the structure of AI cost and applying focused optimization strategies allows enterprises to transform AI development from an experimental expense into a strategic growth engine. Real-time monitoring, cost-aware system design, and close collaboration between finance and technical teams are critical to maintaining efficiency as usage expands. A proactive and iterative optimization approach will help organizations sustain innovation while keeping AI costs under control.