Generative AI Development: Roadmap for Successfully

April 16, 2026

The promise of generative AI development is extraordinary, with analysts estimating it could add up to $4.4 trillion annually to the global economy – but that value only materializes when implementation is executed with discipline and structure.

This guide gives executives a clear, business-first path to implementing generative AI at scale. It helps ensure that AI initiatives are tightly aligned with strategic priorities, deliver measurable value, and are governed responsibly, rather than dissolving into fragmented pilots or uncontrolled experimentation.

By following this sequence, organizations can capture early wins while building the foundation for long-term, scalable impact.

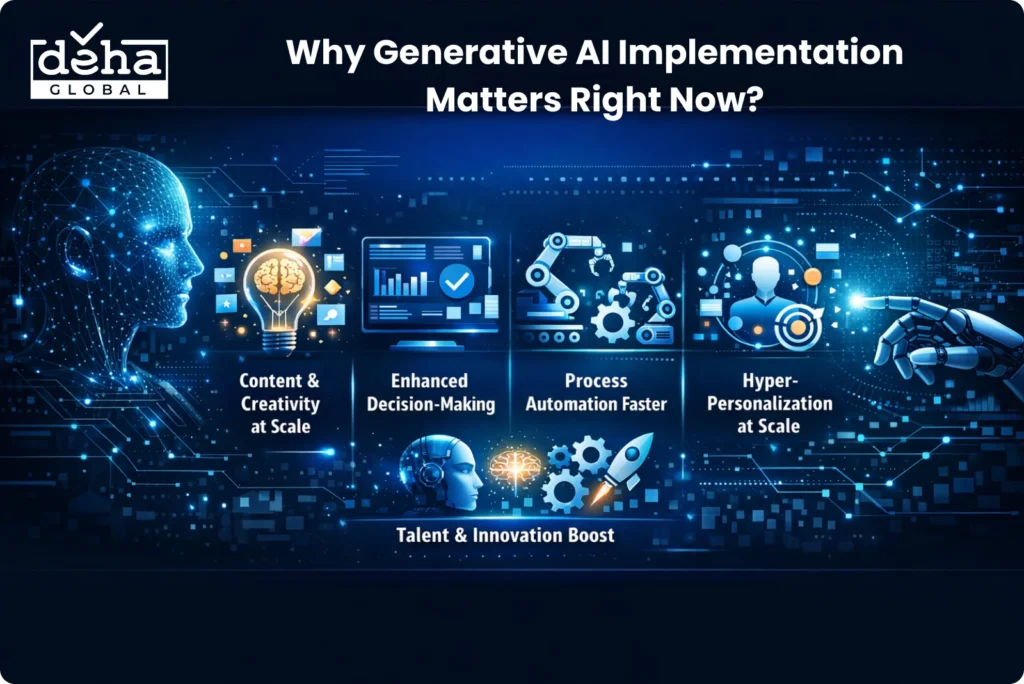

Traditional AI systems were largely built to analyze existing data and surface insights — useful, but ultimately reactive. Generative AI represents a fundamental shift in that dynamic, because these systems actively create new content: text, images, code, designs, and even strategic analysis that previously required human expertise.

As a result, organizations that implement GenAI effectively are not just becoming more efficient — they are unlocking entirely new ways of working, communicating, and delivering value to customers.

The business case for acting now is substantial. Below are the key reasons why generative AI development has become a strategic priority across industries:

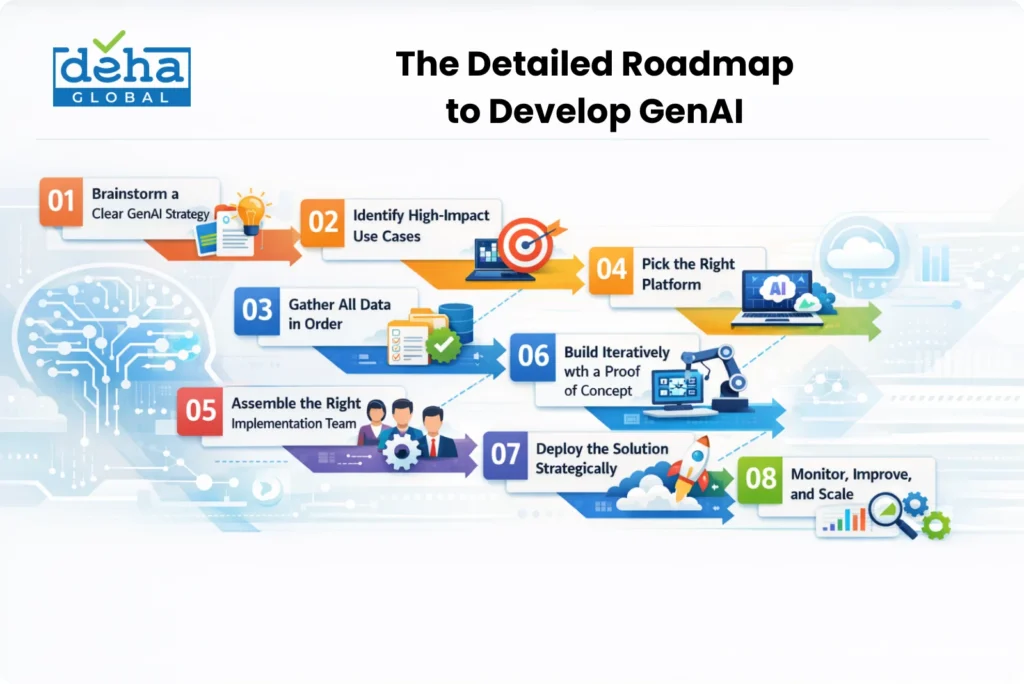

Strategic clarity is the foundation that separates successful implementations from expensive experiments. Before evaluating any platform, assembling a team, or collecting data, every stakeholder involved needs to agree on one fundamental question: what specific business problem will this generative AI initiative solve?

The most effective way to build that clarity is to translate abstract ambitions into concrete, measurable objectives tied directly to organizational priorities. Rather than framing goals as vague aspirations such as “improve efficiency,” leadership teams should define specific KPIs, for example, “reduce document processing time from 4 hours to 30 minutes” or “increase sales-qualified leads by 25% through personalized outreach campaigns.”

These quantifiable targets serve two purposes simultaneously: they guide every subsequent implementation decision, and they secure executive buy-in by demonstrating a clear and defensible ROI case from day one.
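To make a target like the document-processing example trackable, it helps to record the baseline and the target explicitly. The following is a minimal sketch, not a prescribed tool: the `KpiTarget` class, its field names, and the measured value of 135 minutes are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiTarget:
    name: str
    baseline: float  # value before the initiative starts
    target: float    # value the initiative commits to reaching
    unit: str

    def progress(self, current):
        """Fraction of the baseline-to-target gap closed so far (1.0 = target met)."""
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / gap


# "Reduce document processing time from 4 hours to 30 minutes", in minutes.
doc_processing = KpiTarget("Document processing time", baseline=240, target=30, unit="minutes")

# Hypothetical mid-project measurement of 135 minutes.
print(f"{doc_processing.progress(135):.0%}")  # 50%
```

Because progress is measured against the gap rather than the raw value, the same calculation works for KPIs that should fall (processing time) and KPIs that should rise (qualified leads).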

Verdict: Organizations that skip strategic alignment often build technically functional AI tools that solve the wrong problems — resulting in low adoption, wasted budget, and leadership disillusionment before meaningful ROI is ever realized.

Not every AI idea is worth pursuing first. Successful organizations focus their initial GenAI investment on use cases that deliver maximum business impact with manageable implementation complexity — and they use a structured process to identify those opportunities.

A practical starting point is to run cross-functional workshops with representatives from marketing, operations, customer service, and IT, then evaluate each idea on a simple scoring matrix: rate both impact potential (1–10) and implementation complexity (1–10). High-impact, low-complexity options become the natural candidates for early pilots.
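The scoring matrix can be kept in a spreadsheet, but a short script makes the ranking rule explicit. The sketch below is illustrative only: the article does not say how the two scores should be combined, so ranking by impact minus complexity is an assumption, and the use cases and scores are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    impact: int      # 1 (negligible) to 10 (transformative)
    complexity: int  # 1 (trivial) to 10 (very hard to implement)


def rank_use_cases(use_cases):
    """Sort by impact minus complexity, highest first; ties go to higher impact."""
    for uc in use_cases:
        if not (1 <= uc.impact <= 10 and 1 <= uc.complexity <= 10):
            raise ValueError(f"scores for {uc.name!r} must be between 1 and 10")
    return sorted(
        use_cases,
        key=lambda uc: (uc.impact - uc.complexity, uc.impact),
        reverse=True,
    )


# Hypothetical workshop output, for illustration only.
candidates = [
    UseCase("Personalized outreach drafts", impact=7, complexity=5),
    UseCase("Fully autonomous contract negotiation", impact=9, complexity=10),
    UseCase("Support ticket summarization", impact=8, complexity=3),
]

for uc in rank_use_cases(candidates):
    print(f"{uc.name}: priority {uc.impact - uc.complexity:+d}")
```

With these example scores, ticket summarization ranks first and the autonomous negotiation idea ranks last, despite having the highest raw impact.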

According to McKinsey research, approximately 75% of the total value that GenAI use cases could deliver is concentrated in just four business areas: customer operations, marketing and sales, software engineering, and R&D. These domains are therefore the most fertile ground for early investment.

Verdict: Without use case prioritization, teams default to high-visibility but low-impact projects – consuming implementation capacity on initiatives that generate demonstrations rather than measurable business outcomes.

Step 3: Gather All Data in Order

Generative AI depends heavily on data quality. Poor data often causes project failure. This step is usually the most resource-intensive, taking 60–80% of total effort.

Data Audit and Access

- Identify all relevant data sources (support tickets, docs, chat logs)

- Map data volume, type, and flow across systems

- Detect data silos (enterprises often use 400+ apps)

- Evaluate current infrastructure (data lake, warehouse)

- Prepare at least 10,000 labeled samples for fine-tuning

Data Cleaning and Preparation

- Remove duplicates and fix errors

- Handle missing values and standardize formats

- Ensure clean, consistent datasets

- Split data: 70% training | 20% validation | 10% testing

- Keep the three splits strictly separate so that overfitting can be detected and evaluation results are not inflated by data leakage
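The deduplication and 70/20/10 split described above can be sketched with the standard library alone. This is a minimal illustration, assuming records are plain strings and that only exact duplicates need removing; the function name `split_dataset` and the sample data are hypothetical.

```python
import random


def split_dataset(records, train_pct=70, validation_pct=20, seed=42):
    """Deduplicate, shuffle, and split records into train/validation/test lists."""
    unique = list(dict.fromkeys(records))  # drops exact duplicates, keeps first-seen order
    rng = random.Random(seed)              # fixed seed so the split is reproducible
    rng.shuffle(unique)
    n_train = len(unique) * train_pct // 100
    n_val = len(unique) * validation_pct // 100
    return (
        unique[:n_train],
        unique[n_train:n_train + n_val],
        unique[n_train + n_val:],          # the remainder (about 10%) is the test set
    )


# Hypothetical sample: 1,000 distinct ticket texts plus 50 exact duplicates.
tickets = [f"ticket text {i}" for i in range(1000)] + [f"ticket text {i}" for i in range(50)]
train_set, val_set, test_set = split_dataset(tickets)
print(len(train_set), len(val_set), len(test_set))  # 700 200 100
```

Deduplicating before splitting matters: if the same record lands in both the training and test sets, test scores overstate how well the model generalizes.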

Data Governance and Compliance

- Assign data owners and access control

- Establish governance team (legal, IT, business)

- Check and reduce data bias

- Apply privacy methods (anonymization, etc.)

- Ensure compliance (GDPR, HIPAA, CCPA)
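As one example of a privacy method, obvious identifiers can be masked before text is used for training or sent to a model. The sketch below is deliberately simple and rests on assumptions: the two regular expressions are illustrative, they catch only email addresses and phone-like digit runs, and masking of this kind does not by itself establish compliance with GDPR, HIPAA, or CCPA.

```python
import re

# Deliberately simple patterns; real PII detection needs far broader coverage.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text):
    """Replace email addresses and phone-like digit runs with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    return PHONE_PATTERN.sub("[PHONE]", text)


sample = "Customer jane.doe@example.com called from +1 (555) 010-9999 about a refund."
print(redact_pii(sample))
# Customer [EMAIL] called from [PHONE] about a refund.
```

Names, addresses, and free-text identifiers would pass straight through these patterns, which is why the governance team, not a regex, should decide what counts as adequately anonymized.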

Verdict: Skipping rigorous data preparation produces models that perform well in testing and fail in production — and because data issues compound over time, the cost of fixing them post-deployment typically exceeds the cost of getting it right upfront.

Step 4: Pick the Right Platform

Platform selection is one of the most consequential decisions in the generative AI journey, as it directly determines implementation speed, cost structure, compliance capability, and long-term flexibility. There is no universally correct answer — the right choice depends on an organization’s technical maturity, data sensitivity requirements, and the complexity of the target use case.

The three primary approaches are outlined below:

| Platform Approach | Best Suited For | Key Considerations |
| --- | --- | --- |
| Pre-trained APIs (OpenAI GPT, Azure OpenAI, Google Vertex AI) | Fast deployment, standard use cases | Third-party data governance risks; review data processing agreements carefully |
| Enterprise AI Platforms (IBM WatsonX, Azure ML, Google Cloud AI) | Scalability, compliance, oversight | Higher setup cost, stronger security, and enterprise support |
| Custom Model Development (TensorFlow, PyTorch, Hugging Face fine-tuning) | Highly specialized or proprietary applications | Requires deep ML expertise and significant compute resources |

Organizations with strict data governance requirements may also consider hybrid architectures – using pre-trained APIs for non-sensitive tasks while keeping confidential data processing entirely internal.
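The routing decision at the heart of such a hybrid setup could look roughly like the sketch below. Everything here is an assumption for illustration: the backend labels, the `data_classification` parameter, and the keyword list are hypothetical, and a production system would rely on a proper data classification service rather than keyword matching.

```python
# Hypothetical keyword list; a real deployment would use a data classification service.
SENSITIVE_MARKERS = ("patient", "ssn", "salary", "account number")


def choose_backend(prompt, data_classification="public"):
    """Return 'internal' for confidential work and 'external_api' otherwise."""
    if data_classification != "public":
        return "internal"
    lowered = prompt.lower()
    if any(marker in lowered for marker in SENSITIVE_MARKERS):
        return "internal"  # fail closed: when in doubt, keep the data in-house
    return "external_api"


print(choose_backend("Draft a blog post about our product launch"))
print(choose_backend("Summarize this patient intake form"))
print(choose_backend("Summarize the Q3 board notes", data_classification="confidential"))
```

The design choice worth noting is that the router fails closed: anything not clearly public goes to the internal model, so a classification mistake costs some performance rather than exposing confidential data.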

Verdict: A mismatched platform creates technical debt from day one — locking organizations into infrastructure that cannot scale, integrate, or comply with evolving security requirements without costly rearchitecting.

Generative AI projects are inherently cross-disciplinary, and assembling the right mix of people is just as critical as choosing the right technology. A technically impressive model will underperform, or fail outright, without the business context, domain expertise, and governance oversight that only a well-structured team can provide.

The core roles all implementation teams need include:

Beyond the core team, the organization also needs to formalize AI governance accountability — whether through a dedicated ethics officer, a governance committee, or existing leadership roles. Clear usage policies and documented escalation procedures for problematic outputs are not optional — they are foundational to responsible, trustworthy AI deployment.

Verdict: Underinvesting in team composition leads to capability gaps that surface at the worst possible moments — typically during deployment or when the first production incident requires rapid diagnosis.

POC Scope

Validation with Real Users

Evaluate across 4 key areas:

Iteration Process

Early iteration reduces risk. Fixing mistakes early is faster and cheaper than scaling a flawed solution.

Verdict: Organizations that skip directly to full deployment eliminate the feedback loop that catches flawed assumptions early — turning avoidable prototype failures into expensive production failures.

Moving from a validated prototype to full production deployment is the moment where all previous planning either pays off or unravels. A rushed or poorly structured launch can erode user trust quickly, making recovery significantly harder than a carefully managed rollout would have been.

The deployment should follow a phased progression that limits risk while maximizing organizational learning at each stage:

Each phase should run for 4–6 weeks with clearly defined success criteria – user satisfaction scores, technical benchmarks, and measurable business outcomes – before advancing to the next stage. Alongside the technical rollout, user training and change management deserve equal investment. Research shows that insufficient training contributes to more than 60% of AI implementation failures, and resistance typically stems not from the technology itself, but from employees who feel unprepared or threatened.
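The success-criteria gate between phases can be written down as an explicit check, so that advancing is a decision based on numbers and not on momentum. This is a minimal sketch under stated assumptions: the metric names and all three threshold values are hypothetical, and each organization would set its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PhaseMetrics:
    satisfaction: float  # mean user survey score on a 1-5 scale
    error_rate: float    # fraction of requests that failed technical checks
    kpi_progress: float  # fraction of the business KPI target achieved so far


# Hypothetical thresholds, for illustration only.
MIN_SATISFACTION = 4.0
MAX_ERROR_RATE = 0.02
MIN_KPI_PROGRESS = 0.5


def unmet_criteria(metrics):
    """Return the names of the success criteria this phase has not yet met."""
    failures = []
    if metrics.satisfaction < MIN_SATISFACTION:
        failures.append("user satisfaction")
    if metrics.error_rate > MAX_ERROR_RATE:
        failures.append("technical benchmark")
    if metrics.kpi_progress < MIN_KPI_PROGRESS:
        failures.append("business outcome")
    return failures


pilot = PhaseMetrics(satisfaction=4.3, error_rate=0.05, kpi_progress=0.6)
blockers = unmet_criteria(pilot)
print("advance" if not blockers else f"hold: {', '.join(blockers)}")
# hold: technical benchmark
```

Returning the list of unmet criteria, not just a yes or no, tells the team exactly what to fix before the next review.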

Framing AI consistently as a tool for augmentation – one that reduces repetitive work and frees people for higher-value contributions – is one of the most effective strategies for driving genuine adoption.

Verdict: Unstructured deployment without staged rollout and rollback planning exposes the entire user base to unvalidated outputs – eroding trust in ways that are significantly harder to recover from than a controlled pilot failure.

Deployment marks the beginning of the operational phase – not the end of the implementation journey. Sustaining business value from generative AI requires ongoing monitoring, structured improvement cycles, and a deliberate scaling strategy.

Build a dual-layer monitoring framework that tracks:

Executive dashboards that surface these metrics clearly help maintain leadership engagement and reinforce the case for continued investment. AI models can also drift over time as data patterns evolve or new edge cases emerge.
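One simple way to notice drift is to compare a recent window of output-quality scores against the scores recorded at launch. The sketch below is an assumption-laden illustration, not a full monitoring system: it presumes a per-response quality score between 0 and 1 already exists, and the z-score rule, the threshold of 3.0, and the sample values are hypothetical choices.

```python
from statistics import mean, stdev


def drift_detected(baseline_scores, recent_scores, z_threshold=3.0):
    """Flag drift when the recent mean falls well below the baseline mean.

    The drop is measured in standard errors, using the baseline's spread
    and the size of the recent window.
    """
    baseline_mean = mean(baseline_scores)
    standard_error = stdev(baseline_scores) / len(recent_scores) ** 0.5
    if standard_error == 0:
        return mean(recent_scores) < baseline_mean
    z = (baseline_mean - mean(recent_scores)) / standard_error
    return z > z_threshold


# Hypothetical quality scores (0 to 1) from launch week and from two later windows.
baseline = [0.90, 0.92, 0.88, 0.91, 0.89, 0.93, 0.90, 0.87]
stable_week = [0.90, 0.91, 0.89, 0.90]
degraded_week = [0.80, 0.78, 0.82, 0.79]

print(drift_detected(baseline, stable_week))    # False
print(drift_detected(baseline, degraded_week))  # True
```

A check like this would typically run on a schedule and feed the executive dashboard, with an alert raised as soon as it returns `True`.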

Three operational practices that keep scaled deployments stable:

Verdict: AI systems left unmonitored degrade silently — model drift, shifting data patterns, and emerging edge cases accumulate until user experience deteriorates visibly, by which point remediation requires substantially more effort than continuous oversight would have.

Even the most carefully designed generative AI initiatives can stumble — and in many cases, the obstacles are predictable and preventable. Across enterprise deployments, a consistent set of missteps tends to surface repeatedly, each capable of derailing an otherwise promising project.

The table below summarizes the most common pitfalls, the underlying reasons they occur, and the practical steps organizations can take to avoid them.

| Pitfall | Why It Happens | How to Avoid It |
| --- | --- | --- |
| Skipping the strategy phase | Teams feel pressure to move fast and jump to building before defining clear business objectives | Return to Step 1: define measurable KPIs and tie every implementation decision back to a specific business goal |
| Underestimating data requirements | The complexity and volume of data preparation are routinely miscalculated during project scoping | Allocate 60–80% of project resources to data collection, cleaning, and governance, and treat this as a non-negotiable baseline |
| Ignoring end-user needs | Solutions are built by technical teams in isolation, without ongoing input from the people who will use them | Involve representative users at every development stage, not just during final UAT |
| Inadequate change management | Training and internal communication are treated as low-priority line items rather than core success drivers | Dedicate sufficient budget and time to user education, workflow integration support, and ongoing adoption tracking |
| Perfectionism paralysis | Teams hold back from launching until the solution is “perfect,” losing momentum and stakeholder confidence in the process | Launch with a “good enough” MVP and refine continuously based on real-world feedback; iteration beats delayed perfection |
| Neglecting governance and ethics | Oversight frameworks are deferred until problems emerge, rather than being built in from the start | Define governance guardrails, content filters, bias audits, and ethical review processes before the first line of model code is written |
| Inadequate technical infrastructure | Compute, storage, and network requirements are underestimated during planning, leading to performance degradation at scale | Model infrastructure needs proactively and plan for expected usage growth before capacity becomes an operational crisis |

In Conclusion

The roadmap for generative AI development presented here is, at its core, a framework for disciplined decision-making — one that helps organizations move from early enthusiasm to measurable results by addressing strategy, data, team structure, prototyping, and governance in the right sequence. Each phase builds directly on the previous one, creating a compounding foundation of organizational capability and trust that makes scaling significantly more achievable.

For enterprises willing to invest the time and rigor this process demands, the reward is not merely operational efficiency — it is a durable competitive advantage in a market where AI fluency is rapidly becoming a baseline expectation.